Journal Description

Information

Information is a scientific, peer-reviewed, open access journal of information science and technology, data, knowledge, and communication, and is published monthly online by MDPI. The International Society for Information Studies (IS4SI) is affiliated with Information, and its members receive discounts on the article processing charges.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Ei Compendex, dblp, and other databases.

- Journal Rank: CiteScore - Q2 (Information Systems)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 18 days after submission; acceptance to publication is undertaken in 2.9 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.1 (2022); 5-Year Impact Factor: 2.9 (2022)

Latest Articles

An Interactive Pedagogical Tool for Simulation of Controlled Rectifiers

Information 2024, 15(6), 327; https://doi.org/10.3390/info15060327 (registering DOI) - 4 Jun 2024

Abstract

Active learning approaches, incorporating student engagement through experimentation and problem solving, effectively foster higher-level thinking abilities and enhance academic performance. Interactive tools like simulators align with these methodologies, but commercially available simulators have limitations; particularly, their high cost and lack of customization features pose significant challenges for many educational institutions. This article presents CORES, a web-based educational application designed to simulate controlled rectifier circuits. CORES eliminates the need for intricate circuit assembly and software installation by providing pre-built circuits so that users can concentrate on analyzing circuit behavior by manipulating the thyristor firing angle and load characteristics, while the application generates output voltage and current waveforms under steady-state conditions, minimizing computation time. CORES has proven to be a valuable pedagogical tool, surpassing commercial simulators in terms of accessibility, ease of use, and enriched learning experiences for power electronics students and educators.

(This article belongs to the Special Issue Technology, Learning and Teaching of Electronics with Information Applications)

Open Access Article

A Comparison of Bias Mitigation Techniques for Educational Classification Tasks Using Supervised Machine Learning

by

Tarid Wongvorachan, Okan Bulut, Joyce Xinle Liu and Elisabetta Mazzullo

Information 2024, 15(6), 326; https://doi.org/10.3390/info15060326 - 4 Jun 2024

Abstract

Machine learning (ML) has become integral in educational decision-making through technologies such as learning analytics and educational data mining. However, the adoption of machine learning-driven tools without scrutiny risks perpetuating biases. Despite ongoing efforts to tackle fairness issues, their application to educational datasets remains limited. To address this gap in the literature, this research evaluates the effectiveness of four bias mitigation techniques on an educational dataset used to predict students’ dropout rate. The overarching research question is: “How effective are the techniques of reweighting, resampling, and Reject Option-based Classification (ROC) pivoting in mitigating the predictive bias associated with high school dropout rates in the HSLS:09 dataset?” The effectiveness of these techniques was assessed based on performance metrics including false positive rate (FPR), accuracy, and F1 score. The study focused on the biological sex of students as the protected attribute. The reweighting technique was found to be ineffective, showing results identical to the baseline condition. Both uniform and preferential resampling techniques significantly reduced predictive bias, especially in the FPR metric, but at the cost of reduced accuracy and F1 scores. The ROC pivot technique marginally reduced predictive bias while maintaining the original performance of the classifier, emerging as the optimal method for the HSLS:09 dataset. This research extends the understanding of bias mitigation in educational contexts, demonstrating practical applications of various techniques and providing insights for educators and policymakers. By focusing on an educational dataset, it contributes novel insights beyond the commonly studied datasets, highlighting the importance of context-specific approaches in bias mitigation.
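As a rough illustration of how predictive bias in the FPR metric can be quantified, the sketch below computes the false positive rate separately for each value of a binary protected attribute and reports the gap between them. It is not the authors' code: the function names, the toy labels, and the group codes "F" and "M" are assumptions made for this example only, and the data are not drawn from HSLS:09.

```python
def false_positive_rate(y_true, y_pred):
    """FPR = FP / (FP + TN), computed over the samples whose true label is 0."""
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return fp / (fp + tn) if (fp + tn) else 0.0


def fpr_gap(y_true, y_pred, group):
    """Return per-group FPRs and the largest absolute FPR difference between groups."""
    rates = {}
    for g in set(group):
        idx = [i for i, v in enumerate(group) if v == g]
        rates[g] = false_positive_rate(
            [y_true[i] for i in idx], [y_pred[i] for i in idx]
        )
    values = sorted(rates.values())
    return rates, values[-1] - values[0]


# Hypothetical toy data: 1 = predicted/actual dropout, 0 = no dropout.
y_true = [0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 1, 1]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0, 0, 0, 1, 1]
group = ["F"] * 6 + ["M"] * 6  # assumed coding of the protected attribute

rates, gap = fpr_gap(y_true, y_pred, group)
print(rates, gap)
```

A mitigation technique would be judged on whether it shrinks `gap` without lowering accuracy or F1 too much, which is the trade-off the abstract describes.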

(This article belongs to the Special Issue Real-World Applications of Machine Learning Techniques)

Open Access Review

Generative AI, Research Ethics, and Higher Education Research: Insights from a Scientometric Analysis

by

Saba Mansoor Qadhi, Ahmed Alduais, Youmen Chaaban and Majeda Khraisheh

Information 2024, 15(6), 325; https://doi.org/10.3390/info15060325 - 2 Jun 2024

Abstract

In the digital age, the intersection of artificial intelligence (AI) and higher education (HE) poses novel ethical considerations, necessitating a comprehensive exploration of this multifaceted relationship. This study aims to quantify and characterize the current research trends and critically assess the discourse on ethical AI applications within HE. Employing a mixed-methods design, we integrated quantitative data from the Web of Science, Scopus, and the Lens databases with qualitative insights from selected studies to perform scientometric and content analyses, yielding a nuanced landscape of AI utilization in HE. Our results identified vital research areas through citation bursts, keyword co-occurrence, and thematic clusters. We provided a conceptual model for ethical AI integration in HE, encapsulating dichotomous perspectives on AI’s role in education. Three thematic clusters were identified: ethical frameworks and policy development, academic integrity and content creation, and student interaction with AI. The study concludes that, while AI offers substantial benefits for educational advancement, it also brings challenges that necessitate vigilant governance to uphold academic integrity and ethical standards. The implications extend to policymakers, educators, and AI developers, highlighting the need for ethical guidelines, AI literacy, and human-centered AI tools.

(This article belongs to the Special Issue Next-Generation Programming Education: Integrating Generative AI and Collaborative Tools for Cutting-Edge Learning Experiences)

Open Access Article

Integrating Edge-Intelligence in AUV for Real-Time Fish Hotspot Identification and Fish Species Classification

by

U. Sowmmiya, J. Preetha Roselyn and Prabha Sundaravadivel

Information 2024, 15(6), 324; https://doi.org/10.3390/info15060324 - 31 May 2024

Abstract

Enhancing the livelihood environment for fishermen’s communities with the rapid technological growth is essential in the marine sector. Among the various issues in the fishing industry, fishing zone identification and fish catch detection play a significant role in the fishing community. In this work, the automated prediction of potential fishing zones and classification of fish species in an aquatic environment through machine learning algorithms is developed and implemented. A prototype of the boat structure is designed and developed with lightweight wooden material encompassing all necessary sensors and cameras. The functions of the unmanned boat (FishID-AUV) are based on the user’s control through a user-friendly mobile/web application (APP). The different features impacting the identification of hotspots are considered, and feature selection is performed using various classifier-based learning algorithms, namely, Naive Bayes, Nearest Neighbors, Random Forest and Support Vector Machine (SVM). The performance of these classifiers is compared. The real-time results show that the Naive Bayes classification model provides better accuracy, so it is employed in the application platform for predicting the potential fishing zone. After identifying the first catch, the species are classified using an AlexNet-based deep Convolutional Neural Network. Also, the user can fetch real-time information such as the status of fishing through live video streaming to determine the quality and quantity of fish along with information like pH, temperature and humidity. The proposed work is implemented in a real-time boat structure prototype and is validated with data from sensors and satellites.

(This article belongs to the Special Issue Artificial Intelligence on the Edge)

Open Access Article

Architectural Framework to Enhance Image-Based Vehicle Positioning for Advanced Functionalities

by

Iosif-Alin Beti, Paul-Corneliu Herghelegiu and Constantin-Florin Caruntu

Information 2024, 15(6), 323; https://doi.org/10.3390/info15060323 - 31 May 2024

Abstract

The growing number of vehicles on the roads has resulted in several challenges, including increased accident rates, fuel consumption, pollution, travel time, and driving stress. However, recent advancements in intelligent vehicle technologies, such as sensors and communication networks, have the potential to revolutionize road traffic and address these challenges. In particular, the concept of platooning for autonomous vehicles, where they travel in groups at high speeds with minimal distances between them, has been proposed to enhance the efficiency of road traffic. To achieve this, it is essential to determine the precise position of vehicles relative to each other. Global positioning system (GPS) devices have an intended positioning error that might increase due to various conditions, e.g., the number of available satellites, nearby buildings, trees, driving into tunnels, etc., making it difficult to compute the exact relative position between two vehicles. To address this challenge, this paper proposes a new architectural framework to improve positioning accuracy using images captured by onboard cameras. It presents a novel algorithm and performance results for vehicle positioning based on GPS and video data. This approach is decentralized, meaning that each vehicle has its own camera and computing unit and communicates with nearby vehicles.

(This article belongs to the Special Issue Modeling, Design, Analysis and Management of Embedded Control Systems for Automated Driving)

Open Access Article

Prediction of Disk Failure Based on Classification Intensity Resampling

by

Sheng Wu and Jihong Guan

Information 2024, 15(6), 322; https://doi.org/10.3390/info15060322 - 31 May 2024

Abstract

With the rapid growth of the data scale in data centers, the high reliability of storage is facing various challenges. Specifically, hardware failures such as disk faults occur frequently, causing serious system availability issues. In this context, hardware fault prediction based on AI and big data technologies has become a research hotspot, aiming to guide operation and maintenance personnel to implement preventive replacement through accurate prediction to reduce hardware failure rates. However, existing methods still have weaknesses in terms of accuracy due to the impacts of data quality issues such as the sample imbalance. This article proposes a disk fault prediction method based on classification intensity resampling, which fills the gap between the degree of data imbalance and the actual classification intensity of the task by introducing a base classifier to calculate the classification intensity, thus better preserving the data features of the original dataset. In addition, using ensemble learning methods such as random forests, combined with resampling, an integrated classifier for imbalanced data is developed to further improve the prediction accuracy. Experimental verification shows that compared with traditional methods, the F1-score of disk fault prediction is improved by 6%, and the model training time is also greatly reduced. The fault prediction method proposed in this paper has been applied to approximately 80 disk drives and nearly 40,000 disks in the production environment of a large bank’s data center to guide preventive replacements. Compared to traditional methods, the number of preventive replacements based on our method has decreased by approximately 21%, while the overall disk failure rate remains unchanged, thus demonstrating the effectiveness of our method.

(This article belongs to the Special Issue Machine Learning Approaches for Imbalanced Domains: Emerging Trends and Applications)

Open Access Article

Research on Facial Expression Recognition Algorithm Based on Lightweight Transformer

by

Bin Jiang, Nanxing Li, Xiaomei Cui, Weihua Liu, Zeqi Yu and Yongheng Xie

Information 2024, 15(6), 321; https://doi.org/10.3390/info15060321 - 31 May 2024

Abstract

►▼

Show Figures

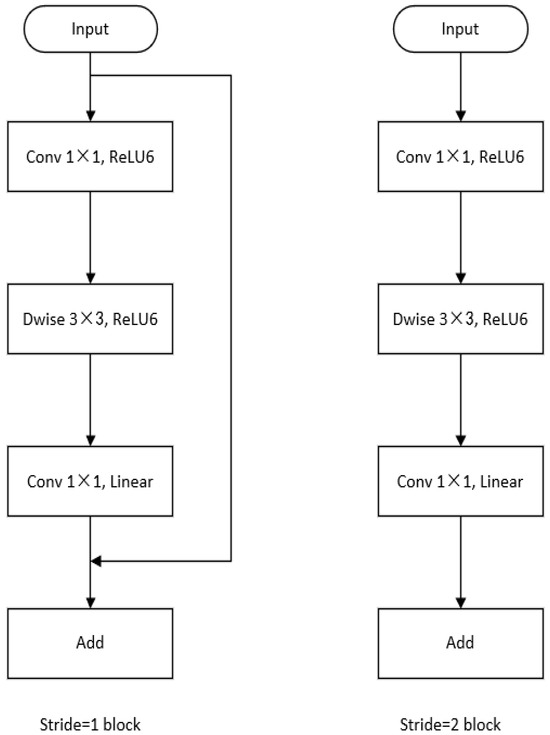

To avoid the overfitting problem of the network model and improve the facial expression recognition effect of partially occluded facial images, an improved facial expression recognition algorithm based on MobileViT has been proposed. Firstly, in order to obtain features that are useful and

[...] Read more.

To avoid the overfitting problem of the network model and improve the facial expression recognition effect of partially occluded facial images, an improved facial expression recognition algorithm based on MobileViT has been proposed. Firstly, in order to obtain features that are useful and richer for experiments, deep convolution operations are added to the inverted residual blocks of this network, thus improving the facial expression recognition rate. Then, in the process of dimension reduction, the activation function can significantly improve the convergence speed of the model and quickly reduce the loss error in the training process, while preserving the effective facial expression features as much as possible and reducing the overfitting problem. Experimental results on RaFD, FER2013, and FER2013Plus show that this method has significant advantages over mainstream networks and that the network achieves the highest recognition rate.

Open Access Editorial

An Editorial for the Special Issue “Pervasive Computing in IoT”

by

Spyros Panagiotakis and Evangelos K. Markakis

Information 2024, 15(6), 320; https://doi.org/10.3390/info15060320 - 30 May 2024

Abstract

In the era of Internet of Things (IoT) we have entered, the “Monitoring–Decision–Execution” cycle of typical autonomic and automation systems is extended, so it includes distributed developments that might scale from a smart home or greenhouse to a smart city and from autonomous driving to emergency management [...]

(This article belongs to the Special Issue Pervasive Computing in IoT)

Open Access Article

A Multimethod Approach for Healthcare Information Sharing Systems: Text Analysis and Empirical Data

by

Amit Malhan, Robert Pavur, Lou E. Pelton and Ava Hajian

Information 2024, 15(6), 319; https://doi.org/10.3390/info15060319 - 29 May 2024

Abstract

This paper provides empirical evidence using two studies to explain the primary factors facilitating electronic health record (EHR) systems adoption through the lens of the resource advantage theory. We aim to address the following research questions: What are the main organizational antecedents of EHR implementation? What is the role of monitoring in EHR system implementation? What are the current themes and people’s attitudes toward EHR systems? This paper includes two empirical studies. Study 1 presents a research model based on data collected from four different archival datasets. Drawing upon the resource advantage theory, this paper uses archival data from 200 Texas hospitals, thus mitigating potential response bias and enhancing the validity of the findings. Study 2 includes a text analysis of 5154 textual data, sentiment analysis, and topic modeling. Study 1’s findings reveal that joint ventures and ownership are the two main enablers of adopting EHR systems in 200 Texas hospitals. Moreover, the results offer a moderating role of monitoring in strengthening the relationship between joint-venture capability and the implementation of EHR systems. Study 2’s results indicate a positive attitude toward EHR systems. The U.S. was unique in the sample due to its slower adoption of EHR systems than other developed countries. Physician burnout also emerged as a significant concern in the context of EHR adoption. Topic modeling identified three themes: training, healthcare interoperability, and organizational barriers. In a multimethod design, this paper contributes to prior work by offering two new EHR antecedents: hospital ownership and joint-venture capability. Moreover, this paper suggests that the monitoring mechanism moderates the adoption of EHR systems in Texas hospitals. Moreover, this paper contributes to prior EHR works by performing text analysis of textual data to carry out sentiment analysis and topic modeling.

(This article belongs to the Special Issue Information Systems in Healthcare)

Open Access Article

GRAAL: Graph-Based Retrieval for Collecting Related Passages across Multiple Documents

by

Misael Mongiovì and Aldo Gangemi

Information 2024, 15(6), 318; https://doi.org/10.3390/info15060318 - 29 May 2024

Abstract

Finding passages related to a sentence over a large collection of text documents is a fundamental task for claim verification and open-domain question answering. For instance, a common approach for verifying a claim is to extract short snippets of relevant text from a collection of reference documents and provide them as input to a natural language inference machine that determines whether the claim can be deduced or refuted. Available approaches struggle when several pieces of evidence from different documents need to be combined to make an inference, as individual documents often have a low relevance with the input and are therefore excluded. We propose GRAAL (GRAph-based retrievAL), a novel graph-based approach that outlines the relevant evidence as a subgraph of a large graph that summarizes the whole corpus. We assess the validity of this approach by building a large graph that represents co-occurring entity mentions on a corpus of Wikipedia pages and using this graph to identify candidate text relevant to a claim across multiple pages. Our experiments on a subset of FEVER, a popular benchmark, show that the proposed approach is effective in identifying short passages related to a claim from multiple documents.
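The idea of retrieving evidence through a graph of co-occurring entity mentions can be sketched in a few lines. This is only a minimal illustration under stated assumptions, not the GRAAL algorithm itself: the passage identifiers, the entity sets, the one-hop expansion rule, and both function names are hypothetical and chosen for the example.

```python
from collections import defaultdict
from itertools import combinations


def build_cooccurrence_graph(passages):
    """Link two entities whenever they are mentioned in the same passage."""
    graph = defaultdict(set)
    for entities in passages.values():
        for a, b in combinations(sorted(entities), 2):
            graph[a].add(b)
            graph[b].add(a)
    return graph


def candidate_passages(passages, graph, claim_entities):
    """Select passages mentioning a claim entity or one of its direct neighbours."""
    expanded = set(claim_entities)
    for entity in claim_entities:
        expanded |= graph.get(entity, set())
    return sorted(pid for pid, ents in passages.items() if ents & expanded)


# Hypothetical corpus: passage id -> entities mentioned in that passage.
passages = {
    "doc1#p1": {"Marie Curie", "Warsaw"},
    "doc2#p3": {"Warsaw", "Poland"},
    "doc3#p2": {"Paris", "France"},
}
graph = build_cooccurrence_graph(passages)
candidates = candidate_passages(passages, graph, {"Marie Curie"})
print(candidates)
```

Here the second passage never mentions the claim entity, yet it is retrieved because it shares an entity with a passage that does. That is the kind of cross-document link that per-document relevance scoring tends to miss.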

(This article belongs to the Special Issue 2nd Edition of Information Retrieval and Social Media Mining)

Open Access Review

Reliability and Security for Fog Computing Systems

by

Egor Shiriaev, Tatiana Ermakova, Ekaterina Bezuglova, Maria A. Lapina and Mikhail Babenko

Information 2024, 15(6), 317; https://doi.org/10.3390/info15060317 - 29 May 2024

Abstract

Fog computing (FC) is a distributed architecture in which computing resources and services are placed on edge devices closer to data sources. This enables more efficient data processing, shorter latency times, and better performance. Fog computing was shown to be a promising solution for addressing the new computing requirements. However, there are still many challenges to overcome to utilize this new computing paradigm, in particular, reliability and security. Following this need, a systematic literature review was conducted to create a list of requirements. As a result, the following four key requirements were formulated: (1) low latency and response times; (2) scalability and resource management; (3) fault tolerance and redundancy; and (4) privacy and security. Low delay and response can be achieved through edge caching, edge real-time analyses and decision making, and mobile edge computing. Scalability and resource management can be enabled by edge federation, virtualization and containerization, and edge resource discovery and orchestration. Fault tolerance and redundancy can be enabled by backup and recovery mechanisms, data replication strategies, and disaster recovery plans, with a residual number system (RNS) being a promising solution. Data security and data privacy are manifested in strong authentication and authorization mechanisms, access control and authorization management, with fully homomorphic encryption (FHE) and the secret sharing system (SSS) being of particular interest.

(This article belongs to the Special Issue Digital Privacy and Security, 2nd Edition)

Open Access Article

Enhancing Arabic Dialect Detection on Social Media: A Hybrid Model with an Attention Mechanism

by

Wael M. S. Yafooz

Information 2024, 15(6), 316; https://doi.org/10.3390/info15060316 - 28 May 2024

Abstract

Recently, the widespread use of social media and easy access to the Internet have brought about a significant transformation in the type of textual data available on the Web. This change is particularly evident in Arabic language usage, as the growing number of users from diverse domains has led to a considerable influx of Arabic text in various dialects, each characterized by differences in morphology, syntax, vocabulary, and pronunciation. Consequently, researchers in language recognition and natural language processing have become increasingly interested in identifying Arabic dialects. Numerous methods have been proposed to recognize this informal data, owing to its crucial implications for several applications, such as sentiment analysis, topic modeling, text summarization, and machine translation. However, Arabic dialect identification is a significant challenge due to the vast diversity of the Arabic language in its dialects. This study introduces a novel hybrid machine and deep learning model, incorporating an attention mechanism for detecting and classifying Arabic dialects. Several experiments were conducted to evaluate the effectiveness of the proposed model, using a novel dataset of user-generated Twitter comments in four Arabic dialects, namely, Egyptian, Gulf, Jordanian, and Yemeni. The dataset comprises 34,905 rows extracted from Twitter, representing an unbalanced data distribution. The data annotation was performed by native speakers proficient in each dialect. The results demonstrate that the proposed model outperforms long short-term memory, bidirectional long short-term memory, and logistic regression models in dialect classification using the following word representations: term frequency-inverse document frequency, Word2Vec, and global vector for word representation.

(This article belongs to the Special Issue Recent Advances in Social Media Mining and Analysis)

Open Access Article

An Ecosystem for the Provision of Digital Accessibility for People with Special Needs

by

Galina Bogdanova, Todor Todorov, Nikolay Noev, Negoslav Sabev, Neda Chehlarova, Mirena Todorova-Ekmekci and Aleksandar Krastev

Information 2024, 15(6), 315; https://doi.org/10.3390/info15060315 - 28 May 2024

Abstract

Digital technologies occupy an important place in today’s developing world. They are also strongly related to new trends in educational technologies. In this context, the digital accessibility of this new environment for people with various special needs is of particular concern. A novel tool for assessment of the technological ecosystem, designed to provide digital accessibility to people with special needs, is described in the paper. The overall structure and the initial test of the system are discussed in the paper. The conceptual framework of the ecosystem and its ontological model are described. Special attention is paid to the accessibility of digital learning and e-learning for people with special needs from a robotic perspective.

(This article belongs to the Special Issue Accessibility and Inclusion in Education: Enabling Digital Technologies)

Open Access Article

Determinants of Humanities and Social Sciences Students’ Intentions to Use Artificial Intelligence Applications for Academic Purposes

by

Konstantinos Lavidas, Iro Voulgari, Stamatios Papadakis, Stavros Athanassopoulos, Antigoni Anastasiou, Andromachi Filippidi, Vassilis Komis and Nikos Karacapilidis

Information 2024, 15(6), 314; https://doi.org/10.3390/info15060314 - 28 May 2024

Abstract

Recent research emphasizes the importance of Artificial Intelligence applications as supporting tools for students in higher education. Simultaneously, an intensive exchange of views has started in the public debate in the international educational community. However, for a more proper use of these applications, it is necessary to investigate the factors that explain their intention and actual use in the future. With the Unified Theory of Acceptance and Use of Technology (UTAUT2) model, this work analyses the factors influencing students’ use and intention to use Artificial Intelligence technology. For this purpose, a sample of 197 Greek students at the School of Humanities and Social Sciences from the University of Patras participated in a survey. The findings highlight that expected performance, habit, and enjoyment of these Artificial Intelligence applications are key determinants influencing students’ intentions to use them. Moreover, behavioural intention, habit, and facilitating conditions explain the usage of these Artificial Intelligence applications. This study did not reveal any moderating effects. The limitations, practical implications, and proposed directions for future research based on these results are discussed.

(This article belongs to the Special Issue Artificial Intelligence and Games Science in Education)

Open Access Correction

Correction: AlJarrah et al. A Context-Aware Android Malware Detection Approach Using Machine Learning. Information 2022, 13, 563

by

Mohammed N. AlJarrah, Qussai M. Yaseen and Ahmad M. Mustafa

Information 2024, 15(6), 313; https://doi.org/10.3390/info15060313 - 28 May 2024

Abstract

In the published article [...]

Open Access Article

Enhancing Personalized Recommendations: A Study on the Efficacy of Multi-Task Learning and Feature Integration

by

Qinyong Wang, Enman Jin, Huizhong Zhang, Yumeng Chen, Yinggao Yue, Danilo B. Dorado, Zhongyi Hu and Minghai Xu

Information 2024, 15(6), 312; https://doi.org/10.3390/info15060312 - 27 May 2024

Abstract

Personalized recommender systems play a crucial role in assisting users in discovering items of interest from vast amounts of information across various domains. However, developing accurate personalized recommender systems remains challenging due to the need to balance model architectures, input feature combinations, and fusion of heterogeneous data sources. This study investigates the impacts of these factors on recommendation performance using the MovieLens and Book Recommendation datasets. Six models, including single-task neural networks, multi-task learning, and baselines, were evaluated with various input feature combinations using Root Mean Squared Error (RMSE) and Mean Absolute Error (MAE). The multi-task learning approach achieved significantly lower RMSE and MAE by effectively leveraging heterogeneous data sources for personalized recommendations through a shared neural network architecture. Furthermore, incorporating user data and content data progressively enhanced performance compared to using only item identifiers. The findings highlight the importance of advanced model architectures and fusing heterogeneous data sources for high-quality recommendations, providing valuable insights for designing effective recommender systems across diverse domains.
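For readers unfamiliar with the two error metrics named above, the following sketch shows how RMSE and MAE are computed from actual and predicted ratings. The rating values are invented for illustration and are not taken from the MovieLens or Book Recommendation datasets; the function names are likewise this example's own.

```python
import math


def rmse(actual, predicted):
    """Root Mean Squared Error: square root of the mean squared difference."""
    squared = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared) / len(squared))


def mae(actual, predicted):
    """Mean Absolute Error: mean of the absolute differences."""
    absolute = [abs(a - p) for a, p in zip(actual, predicted)]
    return sum(absolute) / len(absolute)


# Hypothetical ratings on a 1-5 scale.
ratings = [4.0, 3.0, 5.0, 2.0]
predictions = [3.5, 3.0, 4.0, 3.0]

rmse_value = rmse(ratings, predictions)
mae_value = mae(ratings, predictions)
print(rmse_value, mae_value)
```

Because RMSE squares each error before averaging, it penalizes large misses more heavily than MAE does. Reporting both gives a fuller picture of a recommender's error profile.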

Open Access Article

Harnessing Artificial Intelligence for Automated Diagnosis

by

Christos B. Zachariadis and Helen C. Leligou

Information 2024, 15(6), 311; https://doi.org/10.3390/info15060311 - 27 May 2024

Abstract

The evolving role of artificial intelligence (AI) in healthcare can shift the route of automated, supervised and computer-aided diagnostic radiology. An extensive literature review was conducted to consider the potential of designing a fully automated, complete diagnostic platform capable of integrating the current medical imaging technologies. Adjuvant, targeted, non-systematic research was regarded as necessary, especially to the end-user medical expert, for the completeness, understanding and terminological clarity of this discussion article that focuses on giving a representative and inclusive idea of the evolutional strides that have taken place, not including an AI architecture technical evaluation. Recent developments in AI applications for assessing various organ systems, as well as enhancing oncology and histopathology, show significant impact on medical practice. Published research outcomes of AI picture segmentation and classification algorithms exhibit promising accuracy, sensitivity and specificity. Progress in this field has led to the introduction of the concept of explainable AI, which ensures transparency of deep learning architectures, enabling human involvement in clinical decision making, especially in critical healthcare scenarios. Structure and language standardization of medical reports, along with interdisciplinary collaboration between medical and technical experts, are crucial for research coordination. Patient personal data should always be handled with confidentiality and dignity, while ensuring legality in the attribution of responsibility, particularly in view of machines lacking empathy and self-awareness. The results of our literature research demonstrate the strong potential of utilizing AI architectures, mainly convolutional neural networks, in medical imaging diagnostics, even though a complete automated diagnostic platform, enabling full body scanning, has not yet been presented.

(This article belongs to the Special Issue Real-World Applications of Machine Learning Techniques)

Open Access Article

In-Browser Implementation of a Gamification Rule Definition Language Interpreter

by

Jakub Swacha and Wiktor Przetacznik

Information 2024, 15(6), 310; https://doi.org/10.3390/info15060310 - 27 May 2024

Abstract

One of the practical obstacles limiting the use of cloud-based gamification applications is the lack of an Internet connection of adequate quality. In this paper, we describe a practical solution to this problem: client-side gamification rule processing, so that most events generated by players can be processed without involving server-side functions. As a result, only a small amount of data has to be transmitted to the server for global state synchronization, and only when an Internet connection is available. For this purpose, we adopt a simple textual gamification rule definition format, implement the rule parser and event processor, and evaluate the performance of the solution under experimental conditions. The obtained results are promising, showing that the developed solution can easily handle rule sets and event streams of realistic sizes. The solution is planned to be integrated into the next version of the FGPE gamified programming education platform.

(This article belongs to the Special Issue Cloud Gamification 2023)

Open Access Article

A Collaborative Allocation Algorithm of Communicating, Caching and Computing Resources in Local Power Wireless Communication Network

by

Jiajia Tang, Sujie Shao, Shaoyong Guo, Ye Wang and Shuang Wu

Information 2024, 15(6), 309; https://doi.org/10.3390/info15060309 - 27 May 2024

Abstract

With the rapid development of new power systems, diverse new power services have imposed stricter requirements on network resources and performance. However, the traditional method of transmitting request data to the IoT management platform for unified processing suffers from large delays due to long transmission distances, making it difficult to meet the delay requirements of new power services. Therefore, to reduce the transmission delay, data transmission, storage and computation need to be performed locally. However, due to the limited resources of individual nodes in the local power wireless communication network, issues such as tight coupling between devices and resources and a lack of flexible allocation need to be addressed. The collaborative allocation of resources among multiple nodes in the local network is necessary to satisfy the multi-dimensional resource requirements of new power services. In response to the problems of limited node resources, inflexible resource allocation, and the high complexity of multi-dimensional resource allocation in local power wireless communication networks, this paper proposes a multi-objective joint optimization model for the collaborative allocation of communication, storage, and computing resources. This model utilizes the computational characteristics of communication resources to reduce the dimensionality of the objective function. Furthermore, a mouse swarm optimization algorithm based on multi-strategy improvements is proposed. The simulation results demonstrate that this method can effectively reduce the total system delay and improve the utilization of network resources.

(This article belongs to the Special Issue Internet of Things and Cloud-Fog-Edge Computing)

Open Access Article

Privacy and Security Mechanisms for B2B Data Sharing: A Conceptual Framework

by

Wanying Li, Woon Kwan Tse and Jiaqi Chen

Information 2024, 15(6), 308; https://doi.org/10.3390/info15060308 - 26 May 2024

Abstract

In the age of digitalization, business-to-business (B2B) data sharing is becoming increasingly important, enabling organizations to collaborate and make informed decisions as well as simplifying operations and hopefully creating a cost-effective virtual value chain. This is crucial to the success of modern businesses, especially global business. However, this approach also comes with significant privacy and security challenges, thus requiring robust mechanisms to protect sensitive information. After analyzing the evolving status of B2B data sharing, the purpose of this study is to provide insights into the design of theoretical framework solutions for the field. This study adopts technologies including encryption, access control, data anonymization, and audit trails, with the common goal of striking a balance between facilitating data sharing and protecting data confidentiality as well as data integrity. In addition, emerging technologies such as homomorphic encryption, blockchain, and their applicability as well as advantages in the B2B data sharing environment are explored. The results of this study offer a new approach to managing complex data sharing between organizations, providing a strategic mix of traditional and innovative solutions to promote secure and efficient digital collaboration.

(This article belongs to the Special Issue Digital Privacy and Security, 2nd Edition)

Topics

Topic in

Drones, Electronics, Future Internet, Information, Mathematics

Future Internet Architecture: Difficulties and Opportunities

Topic Editors: Peiying Zhang, Haotong Cao, Keping Yu

Deadline: 30 June 2024

Topic in

Algorithms, Computation, Information, Mathematics

Complex Networks and Social Networks

Topic Editors: Jie Meng, Xiaowei Huang, Minghui Qian, Zhixuan Xu

Deadline: 31 July 2024

Topic in

Algorithms, Future Internet, Information, Mathematics, Symmetry

Research on Data Mining of Electronic Health Records Using Deep Learning Methods

Topic Editors: Dawei Yang, Yu Zhu, Hongyi Xin

Deadline: 31 August 2024

Topic in

Biomedicines, Computers, Information, IJERPH, JPM

eHealth and mHealth: Challenges and Prospects, 2nd Volume

Topic Editors: Antonis Billis, Manuel Dominguez-Morales, Anton Civit

Deadline: 30 September 2024

Special Issues

Special Issue in

Information

Text Mining: Challenges, Algorithms, Tools and Applications

Guest Editor: Fei Liu

Deadline: 15 June 2024

Special Issue in

Information

Systems Engineering and Knowledge Management

Guest Editor: Vladimír Bureš

Deadline: 30 June 2024

Special Issue in

Information

New Generation of Intelligent Transit Systems: Theory and Applications

Guest Editor: Antonio Comi

Deadline: 15 July 2024

Special Issue in

Information

Artificial Intelligence on the Edge

Guest Editors: Lorenzo Carnevale, Massimo Villari

Deadline: 31 July 2024

Topical Collections

Topical Collection in

Information

Natural Language Processing and Applications: Challenges and Perspectives

Collection Editor: Diego Reforgiato Recupero

Topical Collection in

Information

Knowledge Graphs for Search and Recommendation

Collection Editors: Pierpaolo Basile, Annalina Caputo

Topical Collection in

Information

Augmented Reality Technologies, Systems and Applications

Collection Editors: Ramon Fabregat, Jorge Bacca-Acosta, N.D. Duque-Mendez