Journal Description

Big Data and Cognitive Computing

Big Data and Cognitive Computing is an international, peer-reviewed, open access journal on big data and cognitive computing published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), dblp, Inspec, Ei Compendex, and other databases.

- Journal Rank: CiteScore - Q1 (Management Information Systems)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 18.2 days after submission; acceptance to publication is undertaken in 3.9 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.7 (2022)

Latest Articles

Dynamic Electrocardiogram Signal Quality Assessment Method Based on Convolutional Neural Network and Long Short-Term Memory Network

Big Data Cogn. Comput. 2024, 8(6), 57; https://doi.org/10.3390/bdcc8060057 - 31 May 2024

Abstract

Cardiovascular diseases (CVDs) are highly prevalent, sudden in onset, and carry a relatively high fatality rate, posing a significant public health burden. Long-term dynamic electrocardiography, which continuously records an individual's ECG activity during daily life, has high research value. However, ECG signals are weak and highly susceptible to external interference, which may lead to false alarms and misdiagnosis and reduce both diagnostic efficiency and the utilization rate of healthcare resources, so research on the quality of dynamic ECG signals is necessary. To address these problems, this paper proposes a dynamic ECG signal quality assessment method based on CNN and LSTM that divides signals into three quality categories: Q1 signals have a lower noise level and can be used for reliable diagnosis of arrhythmia, etc.; Q2 signals have a higher noise level but still contain information that can be used for heart rate calculation, HRV analysis, etc.; and Q3 signals have a noise level high enough to interfere with the diagnosis of cardiovascular disease and should be discarded or labeled. We use the widely recognized MIT-BIH database, and the resulting model is then applied to realistically collected exercise experimental data to assess its performance in real-world situations. The model achieves an accuracy of 98.65% on the test set, a macro-averaged F1 score of 98.5%, and an F1 score of 99.71% for the prediction of Q3 category signals, indicating good accuracy and generalization performance.
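
The abstract reports a macro-averaged F1 score across the three quality categories. As a reminder of how that metric is formed, the following is a minimal sketch in plain Python; the function names and the toy labels are illustrative assumptions and do not come from the paper.

```python
def f1_per_class(y_true, y_pred, label):
    """F1 score for one class, treating it as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == label and p == label)
    fp = sum(1 for t, p in pairs if t != label and p == label)
    fn = sum(1 for t, p in pairs if t == label and p != label)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def macro_f1(y_true, y_pred, labels):
    """Unweighted mean of the per-class F1 scores."""
    return sum(f1_per_class(y_true, y_pred, c) for c in labels) / len(labels)


# Toy labels for illustration only (not data from the paper).
y_true = ["Q1", "Q1", "Q2", "Q2", "Q3", "Q3"]
y_pred = ["Q1", "Q2", "Q2", "Q2", "Q3", "Q3"]
score = macro_f1(y_true, y_pred, ["Q1", "Q2", "Q3"])
```

Because every class contributes equally to the mean, a weak class lowers the macro score even when overall accuracy is high.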

Open Access Article

Stock Trend Prediction with Machine Learning: Incorporating Inter-Stock Correlation Information through Laplacian Matrix

by Wenxuan Zhang and Benzhuo Lu

Big Data Cogn. Comput. 2024, 8(6), 56; https://doi.org/10.3390/bdcc8060056 - 30 May 2024

Abstract

Predicting stock trends in financial markets is of significant importance to investors and portfolio managers. In addition to a stock’s historical price information, the correlation between that stock and others can also provide valuable information for forecasting future returns. Existing methods often fall short of straightforward and effective capture of the intricate interdependencies between stocks. In this research, we introduce the concept of a Laplacian correlation graph (LOG), designed to explicitly model the correlations in stock price changes as the edges of a graph. After constructing the LOG, we will build a machine learning model, such as a graph attention network (GAT), and incorporate the LOG into the loss term. This innovative loss term is designed to empower the neural network to learn and leverage price correlations among different stocks in a straightforward but effective manner. The advantage of a Laplacian matrix is that matrix operation form is more suitable for current machine learning frameworks, thus achieving high computational efficiency and simpler model representation. Experimental results demonstrate improvements across multiple evaluation metrics using our LOG. Incorporating our LOG into five base machine learning models consistently enhances their predictive performance. Furthermore, backtesting results reveal superior returns and information ratios, underscoring the practical implications of our approach for real-world investment decisions. Our study addresses the limitations of existing methods that miss the correlation between stocks or fail to model correlation in a simple and effective way, and the proposed LOG emerges as a promising tool for stock returns prediction, offering enhanced predictive accuracy and improved investment outcomes.
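
The abstract does not give the exact form of the Laplacian-based loss term. One common way to use a graph Laplacian as a penalty is the quadratic form p^T L p, which equals the sum over edges of w_ij (p_i - p_j)^2 and so discourages different predictions for strongly correlated stocks. The sketch below illustrates that generic idea only; the threshold, function names, and toy numbers are assumptions, not the authors' formulation.

```python
def laplacian_from_correlation(corr, threshold=0.5):
    """Build L = D - W, keeping off-diagonal correlations >= threshold as edge weights."""
    n = len(corr)
    weights = [
        [corr[i][j] if i != j and corr[i][j] >= threshold else 0.0 for j in range(n)]
        for i in range(n)
    ]
    return [
        [sum(weights[i]) if i == j else -weights[i][j] for j in range(n)]
        for i in range(n)
    ]


def laplacian_penalty(pred, lap):
    """Quadratic form pred^T L pred; zero when connected stocks share a prediction."""
    n = len(pred)
    return sum(pred[i] * lap[i][j] * pred[j] for i in range(n) for j in range(n))


# Hypothetical correlation matrix for three stocks and hypothetical predicted returns.
corr = [[1.0, 0.9, 0.1],
        [0.9, 1.0, 0.2],
        [0.1, 0.2, 1.0]]
lap = laplacian_from_correlation(corr)
penalty = laplacian_penalty([0.02, -0.01, 0.05], lap)
```

In a training loop such a penalty would typically be added to the prediction loss with a weighting coefficient; how the paper combines the two terms is not stated in the abstract.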

(This article belongs to the Special Issue Big Data Analytics and Edge Computing: Recent Trends and Future)

Open Access Article

A Secure Data Publishing and Access Service for Sensitive Data from Living Labs: Enabling Collaboration with External Researchers via Shareable Data

by Mikel Hernandez, Evdokimos Konstantinidis, Gorka Epelde, Francisco Londoño, Despoina Petsani, Michalis Timoleon, Vasiliki Fiska, Lampros Mpaltadoros, Christoniki Maga-Nteve, Ilias Machairas and Panagiotis D. Bamidis

Big Data Cogn. Comput. 2024, 8(6), 55; https://doi.org/10.3390/bdcc8060055 - 28 May 2024

Abstract

Intending to enable a broader collaboration with the scientific community while maintaining privacy of the data stored and generated in Living Labs, this paper presents the Shareable Data Publishing and Access Service for Living Labs, implemented within the framework of the H2020 VITALISE project. Building upon previous work, significant enhancements and improvements are presented in the architecture enabling Living Labs to securely publish collected data in an internal and isolated node for external use. External researchers can access a portal to discover and download shareable data versions (anonymised or synthetic data) derived from the data stored across different Living Labs that they can use to develop, test, and debug their processing scripts locally, adhering to legal and ethical data handling practices. Subsequently, they may request remote execution of the same algorithms against the real internal data in Living Lab nodes, comparing the outcomes with those obtained using shareable data. The paper details the architecture, data flows, technical details and validation of the service with real-world usage examples, demonstrating its efficacy in promoting data-driven research in digital health while preserving privacy. The presented service can be used as an intermediary between Living Labs and external researchers for secure data exchange and to accelerate research on data analytics paradigms in digital health, ensuring compliance with data protection laws.

(This article belongs to the Special Issue Privacy-Enhancing Technologies of Data for Sustainable and Secure Cooperation)

Open Access Article

Analyzing the Attractiveness of Food Images Using an Ensemble of Deep Learning Models Trained via Social Media Images

by Tanyaboon Morinaga, Karn Patanukhom and Yuthapong Somchit

Big Data Cogn. Comput. 2024, 8(6), 54; https://doi.org/10.3390/bdcc8060054 - 27 May 2024

Abstract

With the growth of digital media and social networks, sharing visual content has become common in people’s daily lives. In the food industry, visually appealing food images can attract attention, drive engagement, and influence consumer behavior. Therefore, it is crucial for businesses to understand what constitutes attractive food images. Assessing the attractiveness of food images poses significant challenges due to the lack of large labeled datasets that align with diverse public preferences. Additionally, it is challenging for computer assessments to approach human judgment in evaluating aesthetic quality. This paper presents a novel framework that circumvents the need for explicit human annotation by leveraging user engagement data that are readily available on social media platforms. We propose procedures to collect, filter, and automatically label the attractiveness classes of food images based on their user engagement levels. The data gathered from social media are used to create predictive models for category-specific attractiveness assessments. Our experiments across five food categories demonstrate the efficiency of our approach. The experimental results show that our proposed user-engagement-based attractiveness class labeling achieves a high consistency of 97.2% compared to human judgments obtained through A/B testing. Separate attractiveness assessment models were created for each food category using convolutional neural networks (CNNs). When analyzing unseen food images, our models achieve a consistency of 76.0% compared to human judgments. The experimental results suggest that the food image dataset collected from social networks, using the proposed framework, can be successfully utilized for learning food attractiveness assessment models.

(This article belongs to the Special Issue Advances and Applications of Deep Learning Methods and Image Processing)

Open Access Article

Exploiting Rating Prediction Certainty for Recommendation Formulation in Collaborative Filtering

by Dionisis Margaris, Kiriakos Sgardelis, Dimitris Spiliotopoulos and Costas Vassilakis

Big Data Cogn. Comput. 2024, 8(6), 53; https://doi.org/10.3390/bdcc8060053 - 27 May 2024

Abstract

Collaborative filtering is a popular recommender system (RecSys) method that produces rating prediction values for products by combining the ratings that close users have already given to the same products. Afterwards, the products that achieve the highest prediction values are recommended to the user. However, as expected, prediction estimation may contain errors, which, in the case of RecSys, will lead to either not recommending a product that the user would actually like (i.e., purchase, watch, or listen) or to recommending a product that the user would not like, with both cases leading to degraded recommendation quality. Especially in the latter case, the RecSys would be deemed unreliable. In this work, we design and develop a recommendation algorithm that considers both the rating prediction values and the prediction confidence, derived from features associated with rating prediction accuracy in collaborative filtering. The presented algorithm is based on the rationale that it is preferable to recommend an item with a slightly lower prediction value, if that prediction seems to be certain and safe, over another that has a higher value but of lower certainty. The proposed algorithm prevents low-confidence rating predictions from being included in recommendations, ensuring the recommendation quality and reliability of the RecSys.
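
The rationale described above (prefer a slightly lower but more certain prediction over a higher but uncertain one) can be illustrated with a simple filter-then-rank sketch. The confidence scores, the `min_confidence` threshold, and the function name are hypothetical; the paper derives confidence from features of the collaborative filtering prediction, which are not reproduced here.

```python
def recommend(predictions, top_n=2, min_confidence=0.6):
    """Return the top_n items by predicted rating among sufficiently confident predictions.

    predictions: list of (item, predicted_rating, confidence) with confidence in [0, 1].
    """
    trusted = [p for p in predictions if p[2] >= min_confidence]
    ranked = sorted(trusted, key=lambda p: p[1], reverse=True)
    return [item for item, _, _ in ranked[:top_n]]


# Hypothetical candidates: item A has the highest predicted rating but low confidence.
candidates = [
    ("A", 4.9, 0.30),
    ("B", 4.6, 0.90),
    ("C", 4.2, 0.80),
    ("D", 3.0, 0.95),
]
picked = recommend(candidates)
```

Here item A is excluded despite its high predicted rating, and B and C are recommended instead.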

(This article belongs to the Special Issue Business Intelligence and Big Data in E-commerce)

Open Access Article

Image-Based Leaf Disease Recognition Using Transfer Deep Learning with a Novel Versatile Optimization Module

by Petra Radočaj, Dorijan Radočaj and Goran Martinović

Big Data Cogn. Comput. 2024, 8(6), 52; https://doi.org/10.3390/bdcc8060052 - 23 May 2024

Abstract

Because food production is projected to increase by 70% by 2050, crops need additional protection from diseases and pests to ensure a sufficient food supply. Transfer deep learning approaches provide a more efficient solution than traditional methods, which are labor-intensive and struggle to monitor large areas effectively, leading to delayed disease detection. This study proposes a versatile module based on the Inception module, the Mish activation function, and batch normalization (IncMB) as a part of deep neural networks. A convolutional neural network (CNN) with transfer learning was used as the base for the evaluated approaches to tomato disease detection: (1) CNNs, (2) CNNs with a support vector machine (SVM), and (3) CNNs with the proposed IncMB module. The experiment used the public PlantVillage dataset, which contains images of six different tomato leaf diseases. The best result, an accuracy of 97.78%, was achieved by the pre-trained InceptionV3 network containing an IncMB module. In three out of four cases, networks containing the proposed IncMB module achieved higher accuracy than the evaluated CNNs. The proposed IncMB module represents an improvement in the early detection of plant diseases, providing a basis for timely leaf disease detection.
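
Of the three components named for the IncMB module, the Mish activation has a compact closed form, mish(x) = x * tanh(softplus(x)). The following scalar sketch shows only that activation, using a numerically stable softplus; it is not the IncMB module itself, whose layout is not described in the abstract.

```python
import math


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def mish(x):
    """Mish activation: x * tanh(softplus(x))."""
    return x * math.tanh(softplus(x))


# Sample the activation at a few points.
samples = [mish(v) for v in (-2.0, 0.0, 1.0, 5.0)]
```

Unlike ReLU, Mish is smooth and lets small negative values pass through, while behaving almost like the identity for large positive inputs.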

(This article belongs to the Topic Big Data and Artificial Intelligence, 2nd Volume)

Open Access Article

Development of Context-Based Sentiment Classification for Intelligent Stock Market Prediction

by Nurmaganbet Smatov, Ruslan Kalashnikov and Amandyk Kartbayev

Big Data Cogn. Comput. 2024, 8(6), 51; https://doi.org/10.3390/bdcc8060051 - 22 May 2024

Abstract

This paper presents a novel approach to sentiment analysis specifically customized for predicting stock market movements, bypassing the need for external dictionaries that are often unavailable for many languages. Our methodology directly analyzes textual data, with a particular focus on context-specific sentiment words within neural network models. This specificity ensures that our sentiment analysis is both relevant and accurate in identifying trends in the stock market. We employ sophisticated mathematical modeling techniques to enhance both the precision and interpretability of our models. Through meticulous data handling and advanced machine learning methods, we leverage large datasets from Twitter and financial markets to examine the impact of social media sentiment on financial trends. We achieved an accuracy exceeding 75%, highlighting the effectiveness of our modeling approach, which we further refined into a convolutional neural network model. This achievement contributes valuable insights into sentiment analysis within the financial domain, thereby improving the overall clarity of forecasting in this field.

(This article belongs to the Special Issue Advances in Natural Language Processing and Text Mining)

Open Access Article

XplAInable: Explainable AI Smoke Detection at the Edge

by Alexander Lehnert, Falko Gawantka, Jonas During, Franz Just and Marc Reichenbach

Big Data Cogn. Comput. 2024, 8(5), 50; https://doi.org/10.3390/bdcc8050050 - 17 May 2024

Abstract

Wild and forest fires pose a threat to forests and thereby, by extension, to wildlife and humanity. Recent history shows an increase in devastating damage caused by fires. Traditional fire detection systems, such as video surveillance, fail in the early stages of a rural forest fire: such systems see the fire only when the damage is already immense. Novel low-power smoke detection units based on gas sensors can detect smoke fumes in the early development stages of fires. The required proximity is only achieved using a distributed network of sensors interconnected via 5G. In the context of battery-powered sensor nodes, energy efficiency becomes a key metric. Using AI classification combined with XAI enables improved confidence regarding measurements. In this work, we present both a low-power gas sensor for smoke detection and a system elaboration regarding energy-efficient communication schemes and XAI-based evaluation. We show that leveraging edge processing in a smart way, combined with buffered data samples in a 5G communication network, yields optimal energy efficiency and rating results.

(This article belongs to the Special Issue Low-Power Data Processing on the Edge: Solutions for Artificial Intelligence Hardware Acceleration)

Open Access Article

Runtime Verification-Based Safe MARL for Optimized Safety Policy Generation for Multi-Robot Systems

by Yang Liu and Jiankun Li

Big Data Cogn. Comput. 2024, 8(5), 49; https://doi.org/10.3390/bdcc8050049 - 16 May 2024

Abstract

The intelligent warehouse is a modern logistics management system that uses technologies like the Internet of Things, robots, and artificial intelligence to realize automated management and optimize warehousing operations. The multi-robot system (MRS) is an important carrier for implementing an intelligent warehouse, which completes various tasks in the warehouse through cooperation and coordination between robots. As an extension of reinforcement learning and a kind of swarm intelligence, MARL (multi-agent reinforcement learning) can effectively create the multi-robot systems in intelligent warehouses. However, MARL-based multi-robot systems in intelligent warehouses face serious safety issues, such as collisions, conflicts, and congestion. To deal with these issues, this paper proposes a safe MARL method based on runtime verification, i.e., an optimized safety policy-generation framework, for multi-robot systems in intelligent warehouses. The framework consists of three stages. In the first stage, a runtime model SCMG (safety-constrained Markov Game) is defined for the multi-robot system at runtime in the intelligent warehouse. In the second stage, rPATL (probabilistic alternating-time temporal logic with rewards) is used to express safety properties, and SCMG is cyclically verified and refined through runtime verification (RV) to ensure safety. This stage guarantees the safety of robots’ behaviors before training. In the third stage, the verified SCMG guides SCPO (safety-constrained policy optimization) to obtain an optimized safety policy for robots. Finally, a multi-robot warehouse (RWARE) scenario is used for experimental evaluation. The results show that the policy obtained by our framework is safer than existing frameworks and includes a certain degree of optimization.

(This article belongs to the Special Issue Field Robotics and Artificial Intelligence (AI))

Open Access Article

Enhanced Linear and Vision Transformer-Based Architectures for Time Series Forecasting

by Musleh Alharthi and Ausif Mahmood

Big Data Cogn. Comput. 2024, 8(5), 48; https://doi.org/10.3390/bdcc8050048 - 16 May 2024

Abstract

Time series forecasting has been a challenging area in the field of Artificial Intelligence. Various approaches, such as linear neural networks, recurrent linear neural networks, Convolutional Neural Networks, and recently transformers, have been attempted for the time series forecasting domain. Although transformer-based architectures have been outstanding in the Natural Language Processing domain, especially in autoregressive language modeling, the initial attempts to use transformers in the time series arena have met with mixed success. A recent important work indicates that simple linear networks outperform transformer-based designs. We investigate this paradox in detail, comparing the linear neural network- and transformer-based designs and providing insights into why a certain approach may be better for a particular type of problem. We also improve upon the recently proposed simple linear neural network-based architecture by using dual pipelines with batch normalization and reversible instance normalization. Our enhanced architecture outperforms all existing architectures for time series forecasting on a majority of the popular benchmarks.
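
Reversible instance normalization, mentioned above, normalizes each input window with its own statistics and later applies the inverse transform to the model output, so that forecasts return to the original scale. The sketch below shows only that normalize and denormalize pair for a single univariate window; the published technique also includes a learnable affine transform, which is omitted here, and the function names are illustrative assumptions.

```python
import math


def instance_normalize(window, eps=1e-5):
    """Normalize one window with its own mean and standard deviation."""
    mean = sum(window) / len(window)
    var = sum((x - mean) ** 2 for x in window) / len(window)
    std = math.sqrt(var + eps)
    return [(x - mean) / std for x in window], (mean, std)


def instance_denormalize(values, stats):
    """Invert the normalization using the statistics saved from the input window."""
    mean, std = stats
    return [v * std + mean for v in values]


# Toy window for illustration; a forecasting model would operate on `normed`.
window = [102.0, 104.0, 101.0, 107.0]
normed, stats = instance_normalize(window)
restored = instance_denormalize(normed, stats)
```

In a forecasting pipeline the denormalization step would be applied to the model's predictions rather than to the normalized input itself; the round trip above only demonstrates that the transform is invertible.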

Open Access Article

International Classification of Diseases Prediction from MIMIC-III Clinical Text Using Pre-Trained ClinicalBERT and NLP Deep Learning Models Achieving State of the Art

by Ilyas Aden, Christopher H. T. Child and Constantino Carlos Reyes-Aldasoro

Big Data Cogn. Comput. 2024, 8(5), 47; https://doi.org/10.3390/bdcc8050047 - 10 May 2024

Abstract

The International Classification of Diseases (ICD) serves as a widely employed framework for assigning diagnosis codes to electronic health records of patients. These codes facilitate the encapsulation of diagnoses and procedures conducted during a patient’s hospitalisation. This study aims to devise a predictive model for ICD codes based on the MIMIC-III clinical text dataset. Leveraging natural language processing techniques and deep learning architectures, we constructed a pipeline to distill pertinent information from the MIMIC-III dataset: the Medical Information Mart for Intensive Care III (MIMIC-III), a sizable, de-identified, and publicly accessible repository of medical records. Our method entails predicting diagnosis codes from unstructured data, such as discharge summaries and notes encompassing symptoms. We used state-of-the-art deep learning algorithms, such as recurrent neural networks (RNNs), long short-term memory (LSTM) networks, bidirectional LSTM (BiLSTM) and BERT models, after tokenizing the clinical text with Bio-ClinicalBERT, a pre-trained model from Hugging Face. To evaluate the efficacy of our approach, we conducted experiments utilizing the discharge dataset within MIMIC-III. Employing the BERT model, our methodology exhibited commendable accuracy in predicting the top 10 and top 50 diagnosis codes within the MIMIC-III dataset, achieving average accuracies of 88% and 80%, respectively. In comparison to recent studies by Biseda and Kerang, as well as Gangavarapu, which reported F1 scores of 0.72 in predicting the top 10 ICD-10 codes, our model demonstrated better performance, with an F1 score of 0.87. Similarly, in predicting the top 50 ICD-10 codes, previous research achieved an F1 score of 0.75, whereas our method attained an F1 score of 0.81. These results underscore the better performance of deep learning models over conventional machine learning approaches in this domain, thus validating our findings. The ability to predict diagnoses early from clinical notes holds promise in assisting physicians in determining effective treatments, thereby reshaping the conventional paradigm of diagnosis-then-treatment care. Our code is available online.

(This article belongs to the Special Issue Artificial Intelligence and Natural Language Processing)

Open Access Article

Imagine and Imitate: Cost-Effective Bidding under Partially Observable Price Landscapes

by Xiaotong Luo, Yongjian Chen, Shengda Zhuo, Jie Lu, Ziyang Chen, Lichun Li, Jingyan Tian, Xiaotong Ye and Yin Tang

Big Data Cogn. Comput. 2024, 8(5), 46; https://doi.org/10.3390/bdcc8050046 - 28 Apr 2024

Abstract

Real-time bidding has become a major means for online advertisement exchange. The goal of a real-time bidding strategy is to maximize the benefits for stakeholders, e.g., click-through rates or conversion rates. However, in practise, the optimal bidding strategy for real-time bidding is constrained

[...] Read more.

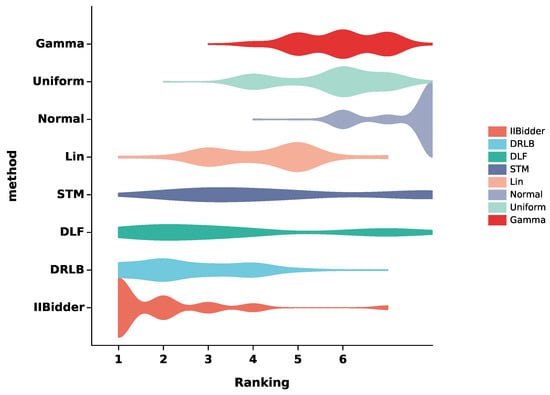

Real-time bidding has become a major means for online advertisement exchange. The goal of a real-time bidding strategy is to maximize the benefits for stakeholders, e.g., click-through rates or conversion rates. However, in practice, the optimal bidding strategy for real-time bidding is constrained by at least three aspects: cost-effectiveness, the dynamic nature of market prices, and the issue of missing bidding values. To address these challenges, we propose Imagine and Imitate Bidding (IIBidder), which includes Strategy Imitation and Imagination modules, to generate cost-effective bidding strategies under partially observable price landscapes. Experimental results on the iPinYou and YOYI datasets demonstrate that IIBidder reduces investment costs, optimizes bidding strategies, and improves future market price predictions.

(This article belongs to the Special Issue Business Intelligence and Big Data in E-commerce)

Open Access Review

Digital Twins for Discrete Manufacturing Lines: A Review

by Xianqun Feng and Jiafu Wan

Big Data Cogn. Comput. 2024, 8(5), 45; https://doi.org/10.3390/bdcc8050045 - 26 Apr 2024

Abstract

►▼

Show Figures

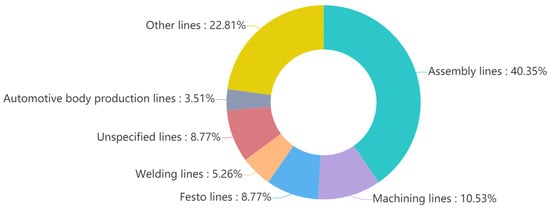

Along with the development of new-generation information technology, digital twins (DTs) have become the most promising enabling technology for smart manufacturing. This article presents a statistical analysis of the literature related to the applications of DTs for discrete manufacturing lines, researches their development

[...] Read more.

Along with the development of new-generation information technology, digital twins (DTs) have become the most promising enabling technology for smart manufacturing. This article presents a statistical analysis of the literature related to the applications of DTs for discrete manufacturing lines and examines their development status in three areas: the design and improvement of manufacturing lines, the scheduling and control of manufacturing lines, and the prediction of faults in critical equipment. The deployment frameworks of DTs in different applications are summarized. In addition, this article discusses the three key technologies of high-fidelity modeling, real-time information interaction methods, and iterative optimization algorithms. Current issues are raised, such as fine-grained sculpting of twin models, the adaptivity of the models, delay issues, and the development of efficient modeling tools. This study provides a reference for the design, modification, and optimization of discrete manufacturing lines.

Open Access Article

Topic Modelling: Going beyond Token Outputs

by Lowri Williams, Eirini Anthi, Laura Arman and Pete Burnap

Big Data Cogn. Comput. 2024, 8(5), 44; https://doi.org/10.3390/bdcc8050044 - 25 Apr 2024

Abstract

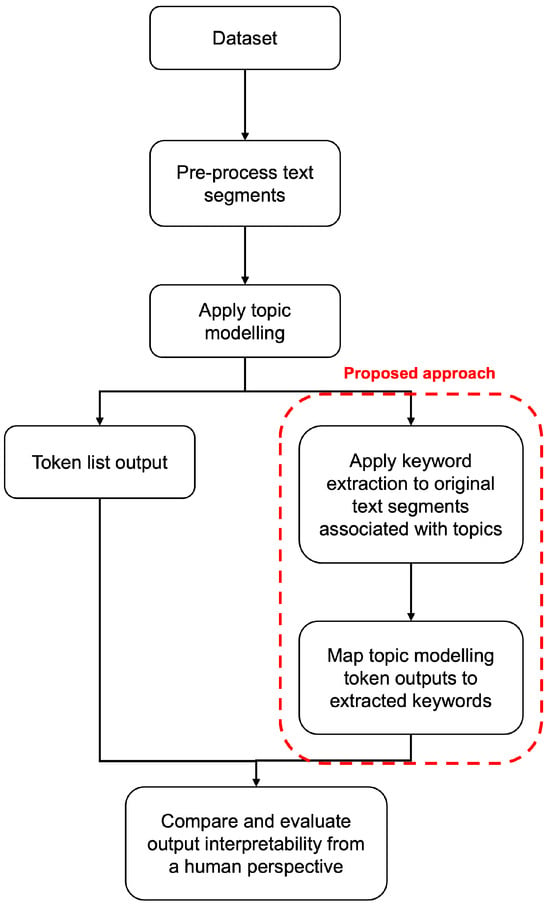

Topic modelling is a text mining technique for identifying salient themes from a number of documents. The output is commonly a set of topics consisting of isolated tokens that often co-occur in such documents. Manual effort is often associated with interpreting a topic’s

[...] Read more.

Topic modelling is a text mining technique for identifying salient themes from a number of documents. The output is commonly a set of topics consisting of isolated tokens that often co-occur in such documents. Manual effort is often associated with interpreting a topic’s description from such tokens. However, from a human’s perspective, such outputs may not adequately provide enough information to infer the meaning of the topics; thus, their interpretability is often inaccurately understood. Although several studies have attempted to automatically extend topic descriptions as a means of enhancing the interpretation of topic models, they rely on external language sources that may become unavailable, must be kept up to date to generate relevant results, and present privacy issues when training on or processing data. This paper presents a novel approach towards extending the output of traditional topic modelling methods beyond a list of isolated tokens. This approach removes the dependence on external sources by using the textual data themselves by extracting high-scoring keywords and mapping them to the topic model’s token outputs. To compare how the proposed method benchmarks against the state of the art, a comparative analysis against results produced by Large Language Models (LLMs) is presented. Such results report that the proposed method resonates with the thematic coverage found in LLMs and often surpasses such models by bridging the gap between broad thematic elements and granular details. In addition, to demonstrate and reinforce the generalisation of the proposed method, the approach was further evaluated using two other topic modelling methods as the underlying models and when using a heterogeneous unseen dataset. 
To measure the interpretability of the proposed outputs against those of the traditional topic modelling approach, independent annotators manually scored each output based on their quality and usefulness as well as the efficiency of the annotation task. The proposed approach demonstrated higher quality and usefulness, as well as higher efficiency in the annotation task, in comparison to the outputs of a traditional topic modelling method, demonstrating an increase in their interpretability.

(This article belongs to the Special Issue Advances in Natural Language Processing and Text Mining)

Open Access Article

Knowledge-Enhanced Prompt Learning for Few-Shot Text Classification

by Jinshuo Liu and Lu Yang

Big Data Cogn. Comput. 2024, 8(4), 43; https://doi.org/10.3390/bdcc8040043 - 18 Apr 2024

Abstract

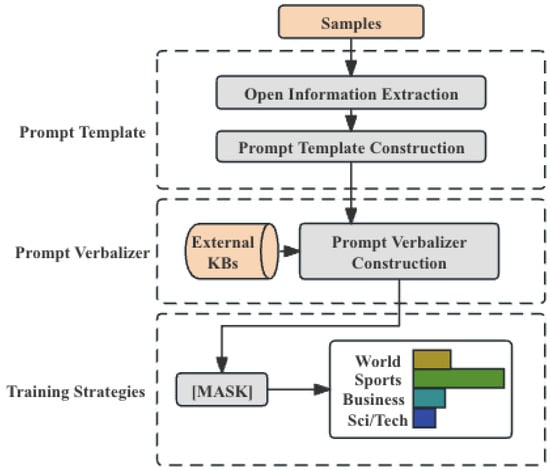

Classification methods based on fine-tuning pre-trained language models often require a large number of labeled samples; therefore, few-shot text classification has attracted considerable attention. Prompt learning is an effective method for addressing few-shot text classification tasks in low-resource settings. The essence of prompt tuning is to insert tokens into the input, thereby converting a text classification task into a masked language modeling problem. However, constructing appropriate prompt templates and verbalizers remains challenging, as manual prompts often require expert knowledge, while auto-constructing prompts is time-consuming. In addition, the extensive knowledge contained in entities and relations should not be ignored. To address these issues, we propose a structured knowledge prompt tuning (SKPT) method, which is a knowledge-enhanced prompt tuning approach. Specifically, SKPT includes three components: prompt template, prompt verbalizer, and training strategies. First, we insert virtual tokens into the prompt template based on open triples to introduce external knowledge. Second, we use an improved knowledgeable verbalizer to expand and filter the label words. Finally, we use structured knowledge constraints during the training phase to optimize the model. Through extensive experiments on few-shot text classification tasks with different settings, the effectiveness of our model has been demonstrated.
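
The idea of turning classification into a masked language modeling problem can be illustrated with a minimal sketch. It is not the SKPT method: the template wording, the label words, and the `toy_scores` dictionary (standing in for a language model's probabilities at the mask position) are all assumptions made up for illustration.

```python
from typing import Dict, List

MASK = "[MASK]"  # placeholder token; the real token depends on the model


def build_prompt(text: str, template: str = "{text} This topic is about {mask}.") -> str:
    """Wrap the input in a (hypothetical) template that contains one mask slot."""
    return template.format(text=text, mask=MASK)


def classify(mask_scores: Dict[str, float], verbalizer: Dict[str, List[str]]) -> str:
    """Map mask-position word scores back to class labels.

    Each label is scored by the mean score of its label words;
    words missing from mask_scores count as 0.0.
    """
    def label_score(words: List[str]) -> float:
        return sum(mask_scores.get(w, 0.0) for w in words) / len(words)

    return max(verbalizer, key=lambda label: label_score(verbalizer[label]))


# Hypothetical verbalizer: each class label is expanded into several label words.
verbalizer = {
    "sports": ["football", "tennis"],
    "science": ["physics", "biology"],
}

prompt = build_prompt("The striker scored twice in the final.")

# Toy stand-in for the probabilities a masked language model would assign.
toy_scores = {"football": 0.6, "tennis": 0.1, "physics": 0.05, "biology": 0.02}
predicted = classify(toy_scores, verbalizer)
```

In a real pipeline the scores would come from a pre-trained masked language model evaluated on `prompt`; the verbalizer step stays the same.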

(This article belongs to the Special Issue Artificial Intelligence and Natural Language Processing)

Open Access Review

Autonomous Vehicles: Evolution of Artificial Intelligence and the Current Industry Landscape

by Divya Garikapati and Sneha Sudhir Shetiya

Big Data Cogn. Comput. 2024, 8(4), 42; https://doi.org/10.3390/bdcc8040042 - 7 Apr 2024

Abstract

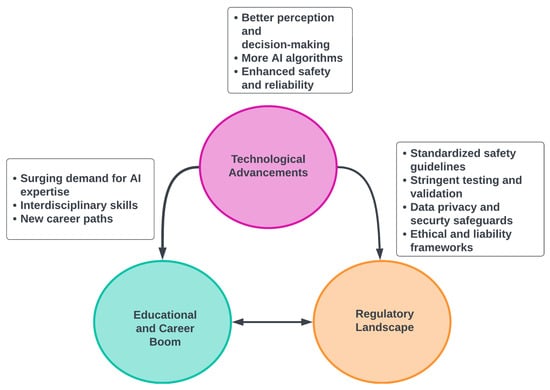

The advent of autonomous vehicles has heralded a transformative era in transportation, reshaping the landscape of mobility through cutting-edge technologies. Central to this evolution is the integration of artificial intelligence (AI), propelling vehicles into realms of unprecedented autonomy. Commencing with an overview of the current industry landscape with respect to Operational Design Domain (ODD), this paper delves into the fundamental role of AI in shaping the autonomous decision-making capabilities of vehicles. It elucidates the steps involved in the AI-powered development life cycle in vehicles, addressing various challenges such as safety, security, privacy, and ethical considerations in AI-driven software development for autonomous vehicles. The study presents statistical insights into the usage and types of AI algorithms over the years, showcasing the evolving research landscape within the automotive industry. Furthermore, the paper highlights the pivotal role of parameters in refining algorithms for both trucks and cars, facilitating vehicles to adapt, learn, and improve performance over time. It concludes by outlining different levels of autonomy, elucidating the nuanced usage of AI algorithms, and discussing the automation of key tasks and the software package size at each level. Overall, the paper provides a comprehensive analysis of the current industry landscape, focusing on several critical aspects.

(This article belongs to the Special Issue Deep Network Learning and Its Applications)

Open AccessArticle

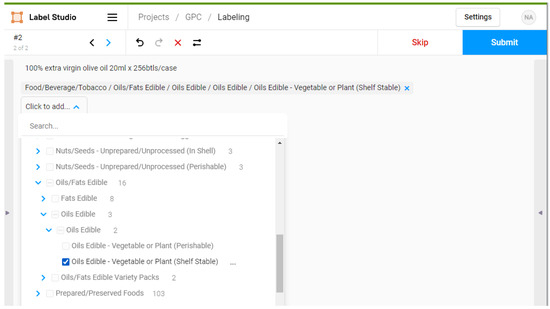

Data Sorting Influence on Short Text Manual Labeling Quality for Hierarchical Classification

by

Olga Narushynska, Vasyl Teslyuk, Anastasiya Doroshenko and Maksym Arzubov

Big Data Cogn. Comput. 2024, 8(4), 41; https://doi.org/10.3390/bdcc8040041 - 7 Apr 2024

Abstract

The precise categorization of brief texts holds significant importance in various applications within the ever-changing realm of artificial intelligence (AI) and natural language processing (NLP). Short texts are everywhere in the digital world, from social media updates to customer reviews and feedback. Nevertheless, short texts’ limited length and context pose unique challenges for accurate classification. This research article delves into the influence of data sorting methods on the quality of manual labeling in hierarchical classification, with a particular focus on short texts. The study is set against the backdrop of the increasing reliance on manual labeling in AI and NLP, highlighting its significance in the accuracy of hierarchical text classification. Methodologically, the study integrates AI, notably zero-shot learning, with human annotation processes to examine the efficacy of various data-sorting strategies. The results demonstrate how different sorting approaches impact the accuracy and consistency of manual labeling, a critical aspect of creating high-quality datasets for NLP applications. The study’s findings reveal a significant time efficiency improvement in terms of labeling, where ordered manual labeling required 760 min per 1000 samples, compared to 800 min for traditional manual labeling, illustrating the practical benefits of optimized data sorting strategies. Comparatively, ordered manual labeling achieved the highest mean accuracy rates across all hierarchical levels, with figures reaching up to 99% for segments, 95% for families, 92% for classes, and 90% for bricks, underscoring the efficiency of structured data sorting. It offers valuable insights and practical guidelines for improving labeling quality in hierarchical classification tasks, thereby advancing the precision of text analysis in AI-driven research. 

(This article belongs to the Special Issue Natural Language Processing and Event Extraction for Big Data)

Open Access Article

Generating Synthetic Sperm Whale Voice Data Using StyleGAN2-ADA

by Ekaterina Kopets, Tatiana Shpilevaya, Oleg Vasilchenko, Artur Karimov and Denis Butusov

Big Data Cogn. Comput. 2024, 8(4), 40; https://doi.org/10.3390/bdcc8040040 - 3 Apr 2024

Abstract

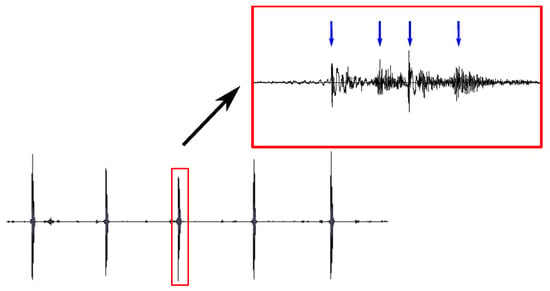

The application of deep learning neural networks enables the processing of extensive volumes of data and often requires dense datasets. In certain domains, researchers encounter challenges related to the scarcity of training data, particularly in marine biology. In addition, many sounds produced by sea mammals are of interest in technical applications, e.g., underwater communication or sonar construction. Thus, generating synthetic biological sounds is an important task for understanding and studying the behavior of various animal species, especially large sea mammals, which demonstrate complex social behavior and can use hydrolocation to navigate underwater. This study is devoted to generating sperm whale vocalizations using a limited sperm whale click dataset. Our approach utilizes an augmentation technique predicated on the transformation of audio sample spectrograms, followed by the employment of the generative adversarial network StyleGAN2-ADA to generate new audio data. The results show that using the chosen augmentation method, namely mixing along the time axis, makes it possible to create fairly similar clicks of sperm whales with a maximum deviation of 2%. The generation of new clicks was reproduced on datasets using selected augmentation approaches with two neural networks: StyleGAN2-ADA and WaveGAN. StyleGAN2-ADA, trained on an augmented dataset using the axis mixing approach, showed better results compared to WaveGAN.

Open Access Article

Automating Feature Extraction from Entity-Relation Models: Experimental Evaluation of Machine Learning Methods for Relational Learning

by Boris Stanoev, Goran Mitrov, Andrea Kulakov, Georgina Mirceva, Petre Lameski and Eftim Zdravevski

Big Data Cogn. Comput. 2024, 8(4), 39; https://doi.org/10.3390/bdcc8040039 - 1 Apr 2024

Abstract

With the exponential growth of data, extracting actionable insights becomes resource-intensive. In many organizations, normalized relational databases store a significant portion of this data, where tables are interconnected through some relations. This paper explores relational learning, which involves joining and merging database tables, often normalized in the third normal form. The subsequent processing includes extracting features and utilizing them in machine learning (ML) models. In this paper, we experiment with the propositionalization algorithm (i.e., Wordification) for feature engineering. Next, we compare the algorithms PropDRM and PropStar, which are designed explicitly for multi-relational data mining, to traditional machine learning algorithms. Based on the performed experiments, we concluded that Gradient Boost, compared to PropDRM, achieves similar performance (F1 score, accuracy, and AUC) on multiple datasets. PropStar consistently underperformed on some datasets while being comparable to the other algorithms on others. In summary, the propositionalization algorithm for feature extraction makes it feasible to apply traditional ML algorithms for relational learning directly. In contrast, approaches tailored specifically for relational learning still face challenges in scalability, interpretability, and efficiency. These findings have a practical impact that can help speed up the adoption of machine learning in business contexts where data is stored in relational format without requiring domain-specific feature extraction.

(This article belongs to the Special Issue Machine Learning in Data Mining for Knowledge Discovery)

Open Access Article

Comparing Hierarchical Approaches to Enhance Supervised Emotive Text Classification

by Lowri Williams, Eirini Anthi and Pete Burnap

Big Data Cogn. Comput. 2024, 8(4), 38; https://doi.org/10.3390/bdcc8040038 - 29 Mar 2024

Abstract

The performance of emotive text classification using affective hierarchical schemes (e.g., WordNet-Affect) is often evaluated using the same traditional measures that are applied when a finite set of isolated classes is used. However, applying such measures means the full characteristics and structure of the emotive hierarchical scheme are not considered. Thus, the overall performance of emotive text classification using emotion hierarchical schemes is often inaccurately reported and may lead to ineffective information retrieval and decision making. This paper provides a comparative investigation into how methods used in hierarchical classification problems in other domains, which extend traditional evaluation metrics to consider the characteristics of the hierarchical classification scheme, can be applied and subsequently improve the classification of emotive texts. This study investigates the classification performance of three widely used classifiers, Naive Bayes, J48 Decision Tree, and SVM, following the application of the aforementioned methods. The results demonstrated that all the methods improved the emotion classification. However, the most notable improvement was recorded when a depth-based method was applied to both the testing and validation data, where the precision, recall, and F1-score were significantly improved by around 70 percentage points for each classifier.
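
To show why flat measures can under-report performance on a class hierarchy, here is a minimal sketch of one common hierarchy-aware measure: precision and recall computed over each label's set of ancestors. It is not necessarily one of the methods compared in the paper (the depth-based method is not specified in the abstract), and the toy `parent` map is a made-up emotion hierarchy, not WordNet-Affect.

```python
from typing import Dict, Set, Tuple


def with_ancestors(label: str, parent: Dict[str, str]) -> Set[str]:
    """Return the label together with all of its ancestors in the hierarchy."""
    nodes = {label}
    while label in parent:
        label = parent[label]
        nodes.add(label)
    return nodes


def hierarchical_prf(true_label: str, predicted_label: str,
                     parent: Dict[str, str]) -> Tuple[float, float, float]:
    """Ancestor-overlap precision, recall, and F1 for a single prediction."""
    true_set = with_ancestors(true_label, parent)
    pred_set = with_ancestors(predicted_label, parent)
    overlap = len(true_set & pred_set)
    precision = overlap / len(pred_set)
    recall = overlap / len(true_set)
    f1 = 0.0 if overlap == 0 else 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical two-level hierarchy (child -> parent); top-level nodes have no entry,
# so no shared root inflates the overlap.
parent = {"joy": "positive", "love": "positive", "anger": "negative"}

near_miss = hierarchical_prf("joy", "love", parent)   # siblings under "positive"
far_miss = hierarchical_prf("joy", "anger", parent)   # different branches
```

A flat measure scores both predictions as equally wrong, whereas the ancestor-overlap measure gives the sibling prediction partial credit and the cross-branch prediction none.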

(This article belongs to the Special Issue Advances in Natural Language Processing and Text Mining)

Topics

Topic in Algorithms, BDCC, BioMedInformatics, Information, Mathematics

Machine Learning Empowered Drug Screen

Topic Editors: Teng Zhou, Jiaqi Wang, Youyi Song

Deadline: 31 August 2024

Topic in BDCC, Entropy, Information, MCA, Mathematics

New Advances in Granular Computing and Data Mining

Topic Editors: Xibei Yang, Bin Xie, Pingxin Wang, Hengrong Ju

Deadline: 30 October 2024

Topic in Electronics, Applied Sciences, BDCC, Mathematics, Chips

Theory and Applications of High Performance Computing

Topic Editors: Pavel Lyakhov, Maxim Deryabin

Deadline: 30 November 2024

Topic in BDCC, Digital, Information, Mathematics, Systems

Data-Driven Group Decision-Making

Topic Editors: Shaojian Qu, Ying Ji, M. Faisal Nadeem

Deadline: 31 December 2024

Special Issues

Special Issue in BDCC

Predictive Performance-Explainability Duality for Big Data Analytics-Powered Healthcare

Guest Editors: Luca Parisi, Mansour Youseffi, Renfei Ma

Deadline: 21 June 2024

Special Issue in BDCC

Machine Learning in Data Mining for Knowledge Discovery

Guest Editors: Cong Gao, Chuntao Ding

Deadline: 30 June 2024

Special Issue in BDCC

Human Factor in Information Systems Development and Management

Guest Editors: Paweł Weichbroth, Jolanta Kowal, Mieczysław Lech Owoc

Deadline: 31 July 2024

Special Issue in BDCC

Machine Learning and AI Technology for Sustainable Development

Guest Editors: Wei-Chen Wu, Jason C. Hung, Yuchih Wei, Jui-hung Kao

Deadline: 13 August 2024

Topical Collections

Topical Collection in BDCC

Machine Learning and Artificial Intelligence for Health Applications on Social Networks

Collection Editor: Carmela Comito